The companies that fail at AI won’t fail because they picked the wrong model. They’ll fail because nobody was in charge of the decisions that mattered most.

Every week, a new survey confirms the same pattern: organizations are rolling out AI tools faster than they are building the structures to govern them. Pilots multiply. Use cases expand. Vendors are onboarded. And somewhere in the middle of all that momentum, critical questions go unanswered — who is responsible when an AI system makes a consequential mistake? Who approved this model for this task? What data was it trained on, and is that data allowed to be used here?

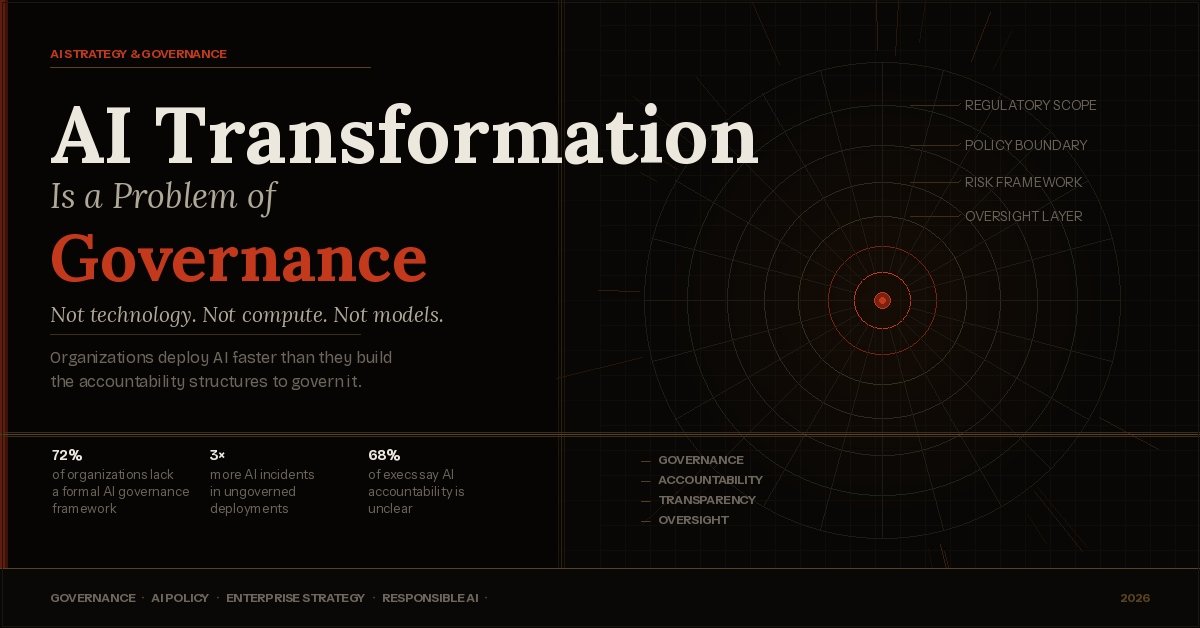

These are not technical questions. They are governance questions. And the growing evidence is that AI transformation is, at its core, a problem of governance — not compute power, not model quality, not integration complexity.

This article explains what that means, why it matters in today’s AI landscape, and what organizations can do about it.

What “AI Governance” Actually Means

Governance, in the context of AI, is the set of policies, processes, roles, and accountability mechanisms that determine how AI systems are developed, deployed, monitored, and retired within an organization or society.

It covers a wide range of questions:

- Who has authority to deploy an AI model for a specific use case?

- How are AI-generated outputs reviewed before they affect customers or decisions?

- What data can be used to train or fine-tune a model — and what is off-limits?

- How is bias detected, measured, and corrected in live systems?

- When something goes wrong, who is accountable — the developer, the vendor, or the business unit?

- How are employees informed when AI is involved in decisions that affect them?

Notice that none of these questions are answered by better algorithms. They require organizational decisions, legal structures, ethical frameworks, and human oversight — which is exactly what governance provides.

Key Distinction

Technology provides capability. Governance determines whether that capability is used responsibly, fairly, and in alignment with organizational values and legal requirements. Without governance, capability becomes liability.

Why AI Transformation Fails Without Governance

The failure modes of AI transformation are remarkably consistent across industries. They rarely look like a model that doesn’t work. More often, they look like a model that works fine while nobody manages the consequences of its use.

The Gap Between Deployment Speed and Oversight Capacity

Modern AI tools are fast to deploy. A team can integrate a large language model into a customer service workflow in a matter of days. What takes much longer — sometimes years — is building the oversight structures that allow an organization to trust that system in production.

When deployment speed outpaces oversight capacity, organizations accumulate what might be called “governance debt” — a backlog of unreviewed risks, unanswered accountability questions, and undocumented decisions that create compounding exposure over time.

Accountability Gaps in AI Decision-Making

Many consequential decisions are now being delegated to AI systems — credit decisions, content moderation, hiring screens, medical triage support, fraud detection. When these systems produce harmful or incorrect outcomes, organizations frequently discover they lack clear accountability structures to respond.

- No single person owns the outcome

- The model’s decision logic is opaque or undocumented

- The affected individual has no clear recourse

- The vendor contract places liability elsewhere

This isn’t a failure of technology. It’s a failure of governance design.

Regulatory Pressure Is Growing Fast

The regulatory environment for AI is hardening quickly. The EU AI Act, the US executive orders on AI safety, sector-specific guidance from financial regulators, and emerging frameworks from data protection authorities all share a common demand: organizations must be able to explain, audit, and justify their AI systems.

That is, by definition, a governance requirement. And organizations that haven’t built governance infrastructure are discovering — often at the worst possible time — that they cannot meet it.

- 72% of organizations have no formal AI governance framework

- 3× more AI incidents occur in organizations without governance oversight

- 68% of executives say AI accountability is unclear in their organization

The Six Governance Failures Behind Most AI Problems

When organizations experience serious AI-related problems — reputational damage, regulatory action, operational failure, or harm to stakeholders — those problems almost always trace back to one or more of the following governance gaps.

No clear ownership

No named individual or team is accountable for the AI system’s performance and its outcomes.

Poor data governance

Training or inference data is used without proper consent, provenance checks, or access controls.

Absent monitoring

AI systems are deployed but not continuously monitored for drift, bias, or unexpected behavior.

Missing documentation

No model cards, risk assessments, or decision logs exist to explain how the system works.

No human override

Consequential decisions are fully automated with no pathway for human review or appeal.

Unenforced policy

AI policies exist on paper but are not integrated into procurement, deployment, or performance review processes.

AI Governance vs. Traditional IT Governance: What’s Different

Many organizations try to manage AI through their existing IT governance frameworks. This is a reasonable starting point, but it is not sufficient. AI systems differ from conventional software in ways that require distinct governance approaches.

| Dimension | Traditional IT | AI Systems |
|---|---|---|
| Behavior | Deterministic: same input, same output | Probabilistic: outputs vary and can shift over time |
| Explainability | Logic is traceable in code | Decision logic is often opaque or statistical |
| Risk profile | Known failure modes, defined error states | Novel failure modes, emergent behavior at scale |
| Bias | Bugs are introduced by programmers | Bias is inherited from data, often invisibly |
| Accountability | Developer responsibility is clear | Responsibility is distributed across vendor, data owner, and deployer |
| Drift | Behavior is stable unless changed | Performance can degrade as data distribution shifts |

These differences mean that organizations need governance capabilities that don’t yet exist in most IT governance frameworks — including model documentation standards, continuous fairness monitoring, human-in-the-loop protocols, and supplier accountability requirements for third-party AI.
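To make the drift row of the table concrete, here is a minimal sketch of one way a monitoring job might quantify a shift in input data. It uses the population stability index (PSI), a common drift metric; the choice of PSI, the function name `psi`, and the 0.1 alert threshold in the usage lines are illustrative assumptions, not something this article prescribes.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population stability index between two binned distributions.

    Each list holds the share of records falling in each bin
    (for example, score deciles) and should sum to roughly 1.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        # Clamp empty bins so the logarithm stays defined.
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


# Hypothetical data: share of applicants per score band at launch vs. this month.
at_launch = [0.25, 0.25, 0.25, 0.25]
this_month = [0.10, 0.20, 0.30, 0.40]

ALERT_THRESHOLD = 0.1  # assumed policy value; set per system and risk tier
if psi(at_launch, this_month) > ALERT_THRESHOLD:
    print("Input distribution has shifted; trigger a governance review.")
```

The governance point is less the formula than the wiring: a number like this only matters if crossing the threshold routes to a named owner with authority to act.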

What Good AI Governance Looks Like in Practice

Good AI governance is not a compliance exercise. It is a management capability — one that enables organizations to use AI more confidently, more responsibly, and at greater scale. Here is what it looks like when it is working well.

1. A Clear Governance Structure With Named Owners

Effective AI governance starts with clarity about who is responsible for what. This typically means establishing a dedicated AI governance function — whether a committee, a Chief AI Officer role, or a cross-functional working group — with real authority over AI deployment decisions.

Each deployed AI system should have a named owner who is accountable for its behavior, its performance metrics, and its compliance with internal policy and external regulation.

2. Risk-Tiered Oversight

Not all AI systems carry the same risk. A recommendation algorithm for a content feed requires different oversight than an AI system that screens job applicants or flags medical images.

Mature governance frameworks use risk tiering — categorizing AI systems by the severity and breadth of their potential impact, and calibrating oversight requirements accordingly. High-risk systems get more rigorous review, more frequent audits, and stronger human oversight requirements. Lower-risk systems move faster.

Practical Example

The EU AI Act explicitly uses risk tiers — prohibited systems, high-risk systems, limited-risk systems, and minimal-risk systems — each with distinct requirements. Organizations building their own governance frameworks are increasingly adopting similar structures, even in jurisdictions where it isn’t yet legally required.
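As a rough illustration of risk tiering, the sketch below scores a system on severity and breadth and maps the result to a tier. The tier names echo the four categories mentioned above, but the scoring rule, the cutoffs, and the `OVERSIGHT` table are hypothetical. This is not the EU AI Act’s legal test, and a real framework would define its own criteria.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    PROHIBITED = 4


# Hypothetical oversight requirements per tier; real values come from policy and law.
OVERSIGHT = {
    RiskTier.MINIMAL: {"audit_every_months": 24, "human_review": False},
    RiskTier.LIMITED: {"audit_every_months": 12, "human_review": False},
    RiskTier.HIGH: {"audit_every_months": 3, "human_review": True},
}


@dataclass
class AISystem:
    name: str
    severity: int  # 1 (minor inconvenience) to 5 (harm to rights, health, or livelihood)
    breadth: int   # 1 (a handful of people) to 5 (large populations)
    banned_use: bool = False  # a use case the organization refuses outright


def assign_tier(system: AISystem) -> RiskTier:
    """Map severity and breadth of potential impact to an oversight tier."""
    if system.banned_use:
        return RiskTier.PROHIBITED
    score = system.severity * system.breadth
    # Severe harm to even a few people is treated as high risk (assumed rule).
    if system.severity >= 4 or score >= 12:
        return RiskTier.HIGH
    if score >= 6:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


feed = AISystem("feed_recommender", severity=2, breadth=5)
hiring = AISystem("hiring_screen", severity=4, breadth=3)
print(assign_tier(feed).name, assign_tier(hiring).name)
```

Under these assumed cutoffs, the content feed lands in the limited tier and the hiring screen in the high tier, which matches the contrast drawn earlier in this section.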

3. Model Documentation and Audit Trails

Every AI system deployed in a consequential context should have documentation that answers the following questions:

- What is this model designed to do, and what is it not designed to do?

- What data was it trained on, and how was that data sourced and validated?

- What were its performance metrics at deployment, including accuracy, fairness, and robustness?

- Who approved it for deployment, and under what conditions?

- What monitoring is in place, and what triggers a review or shutdown?

This documentation — often formalized as a “model card” or “system card” — is the foundation of accountability. Without it, organizations cannot explain their AI systems to regulators, courts, or the public when questions arise.
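One lightweight way to start is to treat the five questions above as required fields and check which ones are still blank. The sketch below is an assumption about how such a record could look. The `ModelCard` field names are invented for illustration and do not follow any particular model card standard.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ModelCard:
    # Field names are hypothetical; each maps to one of the questions above.
    intended_use: str = ""
    out_of_scope_use: str = ""
    training_data_sources: list[str] = field(default_factory=list)
    deployment_metrics: dict[str, float] = field(default_factory=dict)
    approved_by: str = ""
    approval_conditions: str = ""
    monitoring_plan: str = ""
    review_triggers: list[str] = field(default_factory=list)


def missing_fields(card: ModelCard) -> list[str]:
    """Return the names of documentation fields that are still empty."""
    return [f.name for f in fields(card) if not getattr(card, f.name)]


card = ModelCard(
    intended_use="Rank support tickets by urgency",
    training_data_sources=["2023 ticket archive (hypothetical)"],
    deployment_metrics={"accuracy": 0.91},
)
print(missing_fields(card))
```

A deployment gate could refuse to promote a high-risk system while `missing_fields` returns anything, which turns the documentation requirement into an enforced step rather than a policy on paper.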

4. Meaningful Human Oversight

One of the most consistent findings in AI governance research is that “human in the loop” processes only work when they are genuinely empowered. A human reviewer who is processing hundreds of AI-flagged decisions per day, under time pressure, with no ability to override the system, provides the appearance of oversight without the substance of it.

Good governance designs human oversight to be meaningful — with enough time, information, and authority for the human to actually change the outcome when the AI is wrong.
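The three conditions named here (time, information, and authority) can be checked mechanically during an audit of a review workflow. The sketch below is a minimal version under assumed inputs. The `ReviewWorkflow` fields and the idea of estimating minutes per decision are illustrative, not an established method.

```python
from dataclasses import dataclass


@dataclass
class ReviewWorkflow:
    decisions_per_day: int
    minutes_needed_per_decision: float  # estimate for a genuine review
    reviewer_minutes_per_day: float     # total time reviewers actually have
    can_override: bool                  # authority to change the outcome
    sees_model_inputs: bool             # context behind the AI's decision


def oversight_gaps(w: ReviewWorkflow) -> list[str]:
    """List the ways a human review step falls short of meaningful oversight."""
    gaps = []
    required = w.decisions_per_day * w.minutes_needed_per_decision
    if required > w.reviewer_minutes_per_day:
        gaps.append("workload exceeds available review time")
    if not w.can_override:
        gaps.append("reviewer cannot override the system")
    if not w.sees_model_inputs:
        gaps.append("reviewer lacks the context behind the decision")
    return gaps


# Hypothetical workflow: 400 flagged decisions, one reviewer, no override authority.
workflow = ReviewWorkflow(400, 3.0, 420.0, can_override=False, sees_model_inputs=True)
for gap in oversight_gaps(workflow):
    print(gap)
```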

5. Supplier and Vendor Accountability

Most organizations now deploy AI systems they didn’t build themselves. Foundation models, APIs, and AI-embedded software all carry governance implications that extend upstream to the vendor.

Strong governance frameworks treat AI procurement as a risk management activity. This means requiring vendor disclosures about training data, conducting third-party audits where feasible, including AI-specific clauses in vendor contracts, and maintaining the right to audit or terminate a vendor relationship when governance requirements aren’t met.

A Practical Roadmap: Building AI Governance From the Ground Up

Organizations that are early in their governance journey often ask the same question: where do we start? The answer is not to build a perfect framework before deploying anything — that approach is too slow and too disconnected from operational reality. Instead, the goal is to build governance incrementally, starting with the highest-risk deployments and expanding from there.

- Inventory your existing AI deployments. You cannot govern what you haven’t mapped. Start with a full catalog of AI tools, models, and systems currently in use across the organization, including shadow IT and vendor-embedded AI.

- Assess and tier by risk. For each deployment, assess the severity and breadth of potential harm if the system fails or behaves unexpectedly. Prioritize governance resources on high-risk systems first.

- Assign named ownership. Every AI system should have a human owner: a person who is accountable for its performance, compliance, and ongoing management. This sounds simple. In practice, it requires deliberate organizational decisions.

- Document what you have. For your highest-risk systems, create basic model documentation: purpose, training data, performance metrics, known limitations, and approval history. Build the habit before you perfect the format.

- Design or review human oversight processes. Audit existing human review workflows. Are they genuinely empowered to override AI decisions? Is the workload feasible? Is the reviewer given enough context to make a meaningful judgment?

- Establish a governance review cadence. Build recurring governance reviews into operational rhythms: not just at deployment, but quarterly, after significant model updates, and when regulatory requirements change.

- Invest in governance capability, not just policy. Policies without capability are theater. Pair your governance framework with training, tooling, and organizational roles that make compliance feasible and visible.
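The first few steps of this roadmap can begin as something as simple as a structured inventory that reports its own gaps. The following sketch assumes a hypothetical `Deployment` record, tier labels of "high", "limited", and "minimal", and a roughly quarterly review interval. All of these are placeholders to adapt, not a recommended schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

REVIEW_INTERVAL = timedelta(days=92)  # roughly quarterly (assumed cadence)
TIER_ORDER = {"high": 0, "limited": 1, "minimal": 2}


@dataclass
class Deployment:
    name: str
    risk_tier: str                # "high", "limited", or "minimal"
    owner: Optional[str]          # named accountable person, if any
    documented: bool              # basic model documentation exists
    last_review: Optional[date]   # most recent governance review


def open_actions(d: Deployment, today: date) -> list[str]:
    """Return the governance steps still outstanding for one deployment."""
    actions = []
    if d.owner is None:
        actions.append("assign a named owner")
    if d.risk_tier == "high" and not d.documented:
        actions.append("write basic model documentation")
    if d.last_review is None or today - d.last_review > REVIEW_INTERVAL:
        actions.append("schedule a governance review")
    return actions


def backlog(inventory: list[Deployment], today: date) -> list[tuple[str, list[str]]]:
    """List deployments with outstanding actions, highest-risk first."""
    result = []
    for d in sorted(inventory, key=lambda d: TIER_ORDER[d.risk_tier]):
        actions = open_actions(d, today)
        if actions:
            result.append((d.name, actions))
    return result


inventory = [
    Deployment("chat_summarizer", "minimal", "ops-lead", False, date(2024, 5, 1)),
    Deployment("resume_screener", "high", None, False, None),
]
for name, actions in backlog(inventory, today=date(2024, 6, 1)):
    print(name, actions)
```

Sorting by tier reflects the incremental approach described above: the list surfaces the highest-risk gaps first instead of demanding that everything be fixed at once.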

The organizations that will navigate AI transformation successfully are not necessarily those with the most advanced models. They are the ones that have built the institutional capacity to make responsible decisions about how those models are used.

The Governance Gap Is Also a Competitive Gap

It is worth pausing on a point that often gets lost in compliance-focused discussions: AI governance is not just a risk management investment. It is increasingly a competitive differentiator.

Organizations with mature AI governance can move faster in the long run — because they have built the trust and accountability structures that allow them to deploy AI in more sensitive contexts, scale successful pilots with less friction, and respond quickly when systems need adjustment.

Conversely, organizations that skip governance in pursuit of speed often find themselves doing the hard work later — under worse conditions, with more exposure, and with greater disruption to operations that have already scaled around ungoverned systems.

Strategic Insight

The governance question is not “how much oversight can we afford?” It is “how much governance do we need to deploy AI at the scale we want, in the contexts we want, with the confidence of our stakeholders and regulators?” Framed that way, the investment calculus changes significantly.

The Bottom Line

AI transformation is genuinely difficult — but the most common failure points are not algorithmic. They are organizational. They involve unclear ownership, absent documentation, inadequate oversight, and governance frameworks that haven’t kept pace with deployment speed.

The organizations that will lead in AI over the next decade are the ones that understand this now. They are investing in governance infrastructure alongside technical infrastructure — and they are treating accountability, transparency, and human oversight not as constraints on AI adoption, but as the conditions that make AI adoption sustainable.

AI transformation is a problem of governance. The sooner organizations treat it that way, the better their odds of getting it right.
